Monitoring of food intake and eating habits is important for managing and understanding obesity, diabetes, and eating disorders. It can be cumbersome and tedious for individuals to self-report their eating habits, making wearable devices that automatically monitor and record dietary habits an attractive alternative. The challenge is that these devices store or transmit raw data for offline processing. This is a power-consumptive approach that requires a bulky battery or frequent charging, both of which intrude on the user’s normal daily activities and thus make the devices prone to poor user adherence and acceptance.

In this paper, we present a novel analog integrated circuit long short-term memory (LSTM) neural network for embedded eating event detection that eliminates the need for a power-consumptive analog-to-digital converter (ADC) in devices. Unlike previous analog LSTM implementations, our solution contains no internal DACs, ADCs, op-amps or Hadamard multiplications. Our novel approach is based on a current-mode adaptive filter, and it eliminates over 90% of the power requirements of a more conventional solution. This opens up the possibility of unobtrusive, battery-less wearable devices that can be used for long-term monitoring of dietary habits.

You can find this paper along with other publications from the Auracle group on Zotero.

Odame, Kofi, Maria Nyamukuru, Mohsen Shahghasemi, Shengjie Bi, and David Kotz. “Analog Gated Recurrent Neural Network for Detecting Chewing Events.” IEEE Transactions on Biomedical Circuits and Systems 16, no. 6 (December 2022): 1106–15. https://doi.org/10.1109/TBCAS.2022.3218889.

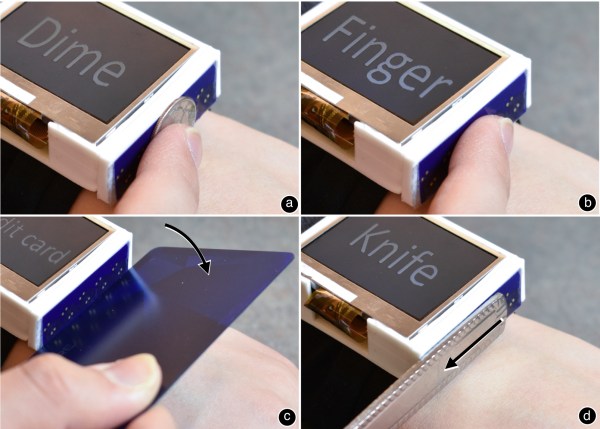

Abstract: We present Indutivo, a contact-based inductive sensing technique for contextual interactions. Our technique recognizes conductive objects (metallic primarily) that are commonly found in households and daily environments, as well as their individual movements when placed against the sensor. These movements include sliding, hinging, and rotation. We describe our sensing principle and how we designed the size, shape, and layout of our sensor coils to optimize sensitivity, sensing range, recognition and tracking accuracy. Through several studies, we also demonstrated the performance of our proposed sensing technique in environments with varying levels of noise and interference conditions. We conclude by presenting demo applications on a smartwatch, as well as insights and lessons we learned from our experience.
